Computer vision transforms checkout processes in retail

by Julia Pott (exclusively for EuroShop.mag)

The automated analysis of video footage using machine vision, or computer vision, has evolved dramatically over the years. The technology is now also transforming the retail industry, where it is used in checkout applications.

We talked to Alex Siskos, VP of Strategy at Everseen, Toby Awalt, Director of Product Marketing at Mashgin, and Paul Dennis, Store Operations Expert for SAI, about image recognition technologies and their application in the retail sector: What can AI see, how does it learn, and how does it optimize checkout processes?

The triumph of computer vision

In the last few years, the machine vision market has grown at a record pace and has taken the retail sector by storm. Paul Dennis tells us the technology has taken huge strides in recent years. He attributes part of this progress to the COVID-19 pandemic, which put pressure on retailers to make their store processes contactless to improve customer safety. “The accuracy of the technology has come on leaps and bounds. Things like facial recognition, tracking people through stores, getting data and insights of how customers are behaving in stores and how store operations are [being carried out] are evolving tenfold and still going on today,” says Dennis.

Apart from applications that recognize people, there are solutions that specialize in object recognition. Technological advances and the subsequent ‘training’ of AI models have given brick-and-mortar retailers ample opportunities to improve operations, enabling them to monitor and analyze nearly everything that happens inside the store. This applies to retail logistics, inventory, operations, and workforce management. Shoppers’ in-store behavior can likewise be analyzed to inform store design and layout, category management, or marketing campaigns. Awalt explains: “There’s a huge amount of powerful analytics that you can derive from watching people’s behavior – even anonymously.”

The camera recognizes the items and lists them on the screen. By scanning the QR code, shoppers transfer the bill to their smartphone. // © beta-web/Giese

Self-scanning, seamless checkout, and loss prevention

According to Siskos, shrinkage (known in the trade as shrink) and theft are the biggest causes of loss for retailers. “Close to 2% […] of theft takes place within retail, and nobody knew what percentage of that is actually happening at self-checkout. When you figure that number out, you’re able to save anywhere between 3,000 to 5,000 dollars per store per week.” Siskos and Dennis also confirm that the main reason some of their retail clients adopted computer vision technologies was to combat shrink and deter theft.

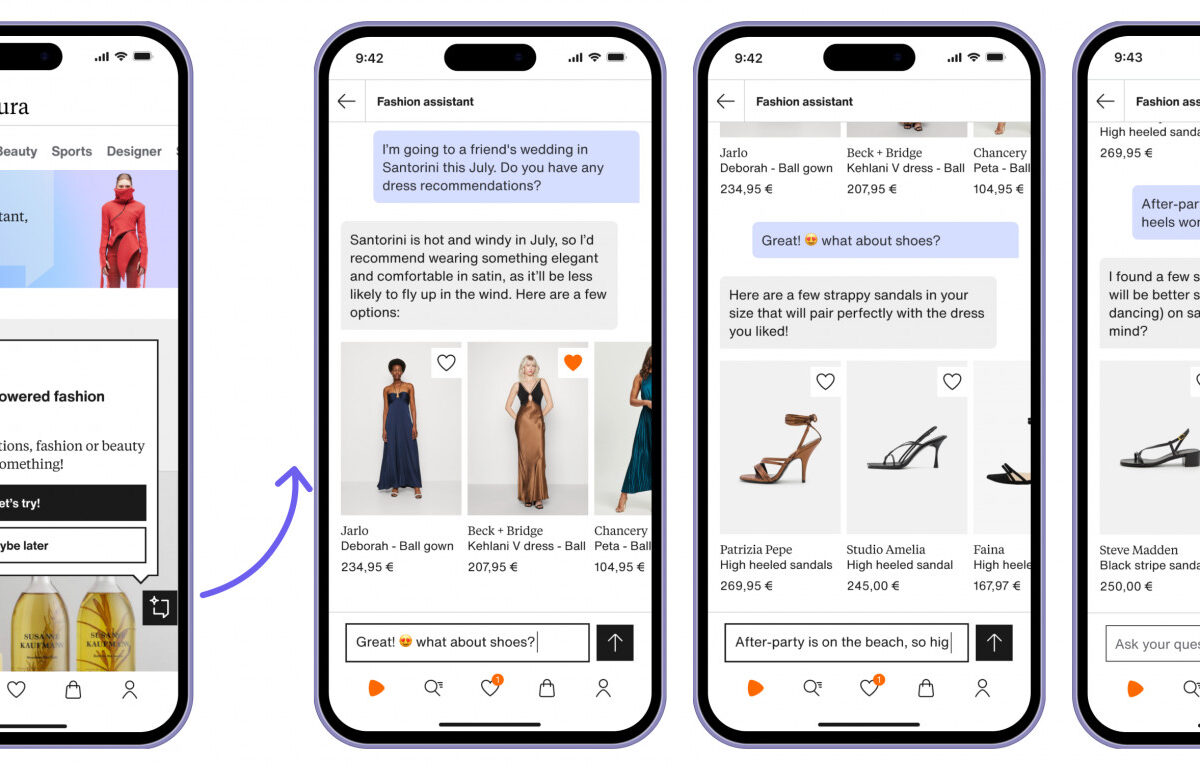

This technology can offer retailers valuable support, especially at checkout – whether in self-scanning and self-checkout solutions or in “invisible checkouts” that let customers leave the store with their items and then send them a digital invoice. The companies of our interview partners – Everseen, Mashgin and SAI – specialize in this field. Dennis looks back: “Our technology was born in retail. It actually came along from a request from a retailer asking us to identify items at checkout to make checkouts more secure. The retailer was worried about items being mis-scanned at checkouts [or] being stolen […].”

Employees can, for example, be notified of errors in the checkout process via smartwatch and check them immediately. // © beta-web/Giese

According to Dennis, camera surveillance combined with machine vision helps retailers recognize individual items and detect whether customers take them without paying. He gives an example: “At self-checkout, one of the common [incidents] is a customer pretending to scan but not scanning an item. […]. We can detect that and send an alert to a security guard in real time so they can decide what to do.” A major advantage, Dennis says, is that incidents no longer have to be investigated and reconstructed after the fact in a lab, since the technology lets retailers monitor in-store incidents in real time and adjust their models accordingly.
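One simple way to picture the “pretend scan” check Dennis describes is to reconcile what the camera sees with what the register records. The sketch below assumes the vision system already emits a timestamped event whenever an item passes over the scanner, which is a strong simplification; the names `ItemPass`, `PosScan` and `find_unscanned_passes` and the two-second matching window are hypothetical and not SAI’s actual method.

```python
from dataclasses import dataclass

# Hypothetical event types (assumptions for illustration only):
# the camera reports an item crossing the scanner area, the POS reports a scan.
@dataclass
class ItemPass:
    timestamp: float  # seconds since the checkout session started
    lane: int

@dataclass
class PosScan:
    timestamp: float
    lane: int

def find_unscanned_passes(passes, scans, window=2.0):
    """Return camera-detected passes with no POS scan on the same lane within
    `window` seconds. Each scan can confirm at most one pass."""
    unmatched_scans = sorted(scans, key=lambda s: s.timestamp)
    alerts = []
    for p in sorted(passes, key=lambda p: p.timestamp):
        match = next(
            (s for s in unmatched_scans
             if s.lane == p.lane and abs(s.timestamp - p.timestamp) <= window),
            None,
        )
        if match is None:
            alerts.append(p)  # possible "pretend scan": candidate for a real-time alert
        else:
            unmatched_scans.remove(match)  # this scan is now accounted for
    return alerts

passes = [ItemPass(1.0, 3), ItemPass(5.0, 3), ItemPass(9.0, 3)]
scans = [PosScan(1.4, 3), PosScan(9.3, 3)]
print([a.timestamp for a in find_unscanned_passes(passes, scans)])  # [5.0]
```

In a real deployment, the alert would be routed to staff, for example to the smartwatch notification shown in the photo above; the matching rule itself is the part this sketch is meant to illustrate.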

The AI learning process: Getting smarter with each article

The AI behind machine vision does not automatically understand what it sees. Just as human beings learn over the course of their lives what a bottle is and the many forms it can take, so must AI. To recognize an object, the system compares recorded images with visual patterns and features it has learned.
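The matching step can be illustrated with a deliberately simplified sketch. It assumes each image has already been reduced to a numeric feature vector (in practice by a trained neural network, which is omitted here) and that each known item is represented by a single learned “prototype” vector. The function names, the toy three-number vectors and the 0.9 similarity threshold are illustrative assumptions, not any vendor’s implementation.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors: 1.0 = same direction, 0.0 = unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def recognize(features, prototypes, threshold=0.9):
    """Return the label of the learned prototype most similar to `features`,
    or None if no prototype reaches the (hypothetical) threshold."""
    best_label, best_score = None, threshold
    for label, proto in prototypes.items():
        score = cosine_similarity(features, proto)
        if score >= best_score:
            best_label, best_score = label, score
    return best_label

# Toy "learned" patterns; real feature vectors have hundreds of dimensions.
PROTOTYPES = {"bottle": [0.9, 0.1, 0.3], "mug": [0.2, 0.8, 0.5]}
print(recognize([0.85, 0.15, 0.35], PROTOTYPES))  # bottle
print(recognize([0.0, 0.0, 1.0], PROTOTYPES))     # None
```

Returning `None` below the threshold mirrors the idea that an unfamiliar object should be flagged as unknown rather than forced into the closest known category.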

Unlike individual images, video footage adds temporal context: Is the bottle being taken off the shelf or placed on it? This is where recurrent neural networks (RNNs) come in: they process images in sequence, carry information from earlier frames forward, and can draw inferences from how the scene changes.
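To show why the order of frames matters, here is a toy two-unit recurrent network in plain Python. It assumes an upstream detector already provides, for each frame, a score between 0 and 1 for “item visible on the shelf”. The weights are hand-set for illustration; a real system would learn them from labeled footage and work on far richer image features, so this is a sketch of the RNN mechanism, not of any vendor’s model.

```python
import math

# Toy vanilla RNN: h_t = tanh(W_X * x_t + W_H @ h_{t-1}), weights hand-set (assumption).
# Unit 0 tracks whether the item is visible in the current frame.
# Unit 1 rises when the item appears, falls when it disappears, and slowly decays.
W_X = [3.0, 2.0]
W_H = [[0.0, 0.0],
       [-2.0, 0.5]]

def rnn_step(x, h):
    """One recurrent step: combine the current frame with the previous hidden state."""
    return [math.tanh(W_X[i] * x + sum(W_H[i][j] * h[j] for j in range(2)))
            for i in range(2)]

def classify(frames, threshold=0.3):
    """frames: per-frame scores (0..1) for 'item visible on the shelf'."""
    h = [0.0, 0.0]
    for x in frames:
        h = rnn_step(x, h)
    change = h[1]  # summary of how visibility changed over the sequence
    if change < -threshold:
        return "taken off shelf"
    if change > threshold:
        return "placed on shelf"
    return "no change"

print(classify([1, 1, 0, 0]))  # taken off shelf
print(classify([0, 0, 1, 1]))  # placed on shelf
```

Both example sequences contain exactly the same frames, only in opposite order. A model that looked at each image in isolation could not tell them apart; the recurrent state is what carries the “before” into the “after”.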

AI requires massive datasets to learn and improve, and new training data must be created for every newly added object.

Siskos explains the learning process: “[Our solution is] deployed across thousands of stores and an even larger number of self-checkouts. We ingest close to 175 years of video on a daily basis. The amount of data that we see gives us the context of what’s happening in retail every single day. [It’s] like you’re operating a car around the NASCAR track: Every time it goes around, you figure out what you want to tweak. Then you pull it back in, you tweak it and you let it back out in the field.”
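Siskos’s figure of 175 years of video per day is easier to picture when converted into hours. Here is a back-of-the-envelope calculation, assuming 365-day years and counting the footage as continuous 24-hour recording (both simplifications):

```latex
\begin{aligned}
175 \times 365 \times 24 &= 1{,}533{,}000 \ \text{hours of footage per day} \\
\frac{1{,}533{,}000}{24} &= 63{,}875 \ \text{camera streams recording around the clock}
\end{aligned}
```

In other words, the daily intake corresponds to the continuous output of roughly 64,000 cameras.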

The garment is placed in different positions under the camera and scanned in each case. The AI saves the scans and thus builds up a database. // © beta-web/Giese

Retailers rightly wonder how quickly and easily a system learns to recognize new items. Depending on the retail segment, product assortments can change frequently, sometimes even weekly, especially in the food and drugstore sectors.

Siskos acknowledges retailers’ concerns: “All it takes is one brand to change the packaging and you’ve basically got a brand-new item.” AI implementation faces other challenges as well. Awalt uses textiles as an example: “In object recognition you’re dealing with challenges like foldable objects: For example, a piece of clothing may shrivel and go in different contortions that are impossible for you to predict with an AI.” Awalt adds that the system also has to learn about objects whose shape does not change: “[A] three-dimensional understanding of objects lets us differentiate important things like size.” This way, similar-looking items, such as coffee mugs or different energy drink bottles, can be told apart by their exact dimensions.
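The size check Awalt mentions can be sketched as a final filter applied after appearance-based recognition has narrowed the choice to a few look-alikes. This assumes the system can already estimate an item’s height, width and depth (for example from several cameras or a depth sensor); the `Item` record, the 5 mm tolerance and the can dimensions below are hypothetical illustration values, not Mashgin data.

```python
from dataclasses import dataclass

@dataclass
class Item:
    name: str
    height_mm: float
    width_mm: float
    depth_mm: float

def match_by_size(measured, candidates, tolerance_mm=5.0):
    """measured: (height, width, depth) in mm, assumed to come from 3D sensing.
    Keep only look-alike candidates whose every dimension is within tolerance,
    then return the closest one, or None if none fits."""
    def error(item):
        # Largest deviation across the three dimensions
        return max(abs(item.height_mm - measured[0]),
                   abs(item.width_mm - measured[1]),
                   abs(item.depth_mm - measured[2]))
    plausible = [c for c in candidates if error(c) <= tolerance_mm]
    return min(plausible, key=error, default=None)

# Two hypothetical cans with near-identical artwork but different sizes
CANS = [Item("Energy drink 250 ml", 134, 53, 53),
        Item("Energy drink 500 ml", 168, 66, 66)]
print(match_by_size((166, 65, 66), CANS).name)  # Energy drink 500 ml
print(match_by_size((150, 60, 60), CANS))       # None
```

Returning `None` when no candidate fits lets the system fall back to another step, such as asking for a barcode scan, instead of guessing between similar products.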

It takes a variety of images for the technology to learn to identify three-dimensional objects, says Siskos. The first images are linked to an item in the POS system. “We need the product to go through the register seven times in its first hour of introduction for us to deduce what it’s connected to. […] And then the way people handle it helps us understand it from every angle.”
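The “seven times in its first hour” rule can be illustrated as a simple co-occurrence count between what the register scans and what the camera sees. This sketch assumes the vision system can assign an unrecognized item a stable “signature” (for example a cluster ID derived from its images); the class and method names are hypothetical, and how Everseen actually performs this association is not described in the interview.

```python
from collections import Counter, defaultdict

REQUIRED_MATCHES = 7        # "seven times in its first hour" (from the interview)
WINDOW_SECONDS = 60 * 60    # the first hour after the item's introduction

class NewItemLinker:
    """Hypothetical sketch: link a visual signature to a SKU once the two have
    co-occurred at the register often enough within the first hour."""

    def __init__(self):
        self.first_seen = {}                # sku -> time of its first scan
        self.counts = defaultdict(Counter)  # sku -> how often each signature co-occurred
        self.links = {}                     # sku -> confirmed visual signature

    def observe(self, sku, signature, t):
        """Record one register event: the POS scanned `sku` at time `t` (seconds)
        while the camera saw an unrecognized item described by `signature`."""
        if sku in self.links:
            return self.links[sku]
        start = self.first_seen.setdefault(sku, t)
        if t - start > WINDOW_SECONDS:
            return None  # outside the first hour; this simplified sketch stops counting
        self.counts[sku][signature] += 1
        best, n = self.counts[sku].most_common(1)[0]
        if n >= REQUIRED_MATCHES:
            self.links[sku] = best
            return best
        return None

linker = NewItemLinker()
for t in range(0, 700, 100):  # seven scans of the new product within the hour
    result = linker.observe("SKU-123", "cluster-A", t)
print(result)  # cluster-A
```

Counting co-occurrences rather than trusting a single scan makes the link robust to the occasional mismatch, for instance when a shopper scans one item while holding another.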

According to Awalt, Mashgin pursues a similar approach. Its technology first identifies the object unambiguously, usually by scanning a barcode, and then captures images of it in different poses. “We’ll recommend anywhere from 20 to 50 poses, depending on how big your database […] is, how many items look similar to it. Give more data where you have more similarity and that’ll get you up to 99.9% accuracy.” Awalt says new items can be added within a minute, after which all connected POS systems have access to the data.
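Awalt’s rule of thumb, “give more data where you have more similarity”, can be expressed as a small heuristic. The sketch below assumes each item is represented by a feature vector, measures how closely a new item resembles its nearest neighbor in the database using cosine similarity, and maps that linearly onto the 20 to 50 pose range quoted above. The linear mapping, the function names and the toy vectors are assumptions for illustration, not Mashgin’s actual formula.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors: 1.0 = same direction, 0.0 = unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def recommended_poses(new_item, database, min_poses=20, max_poses=50):
    """Hypothetical heuristic: the more the new item resembles its closest
    existing item, the more training poses we ask for (linear in similarity)."""
    closest = max((cosine_similarity(new_item, v) for v in database.values()),
                  default=0.0)
    s = min(max(closest, 0.0), 1.0)  # clamp to [0, 1]
    return round(min_poses + s * (max_poses - min_poses))

# Toy feature vectors for items already in the database (illustrative only)
DATABASE = {"mug-white": [0.9, 0.1, 0.2], "bottle-500ml": [0.1, 0.9, 0.3]}
print(recommended_poses([0.88, 0.12, 0.22], DATABASE))  # 50 (near-twin of the white mug)
print(recommended_poses([0.0, 0.0, 1.0], DATABASE))     # 29 (unlike anything stored)
```

A linear scale is the simplest choice; a real system might instead weight by how many neighbors are close, since Awalt mentions both database size and the number of look-alike items.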